Everything you've always wanted to know about Kubernetes observability

Get the scoop on the why and how of Kubernetes observability, from logging strategies to telemetry points. Learn how it can help make your DevOps efforts successful.

When it comes to managing large-scale applications in a dynamic environment, Kubernetes has become the go-to platform for modern enterprises.

By abstracting away the underlying infrastructure and providing a unified interface for deploying, managing, and scaling containerized applications, Kubernetes has enabled businesses to innovate at a much faster pace.

However, this level of complexity makes it challenging to gain visibility into the various components of the system. This is where Kubernetes observability plays a critical role, allowing you to gain insights into the health, performance, and state of your Kubernetes clusters.

In this blog, we will explore the fundamentals of Kubernetes observability and how it can help you better understand your system.

What is Kubernetes observability and why is it important for cloud-native architectures?

Kubernetes observability refers to the ability to monitor, measure, and analyze Kubernetes-based systems and applications through a range of metrics, logs, and events.

With the emergence of cloud-native architectures, Kubernetes observability has become a crucial aspect of ensuring the reliability and performance of these systems. Kubernetes is designed to be highly scalable and dynamic, resulting in complex environments that are difficult to monitor and manage manually.

Observability provides significant benefits in understanding system behavior, identifying errors or bottlenecks, and ultimately improving system performance and resilience. It also gives teams better visibility into the system, which supports effective decision-making and lets them respond quickly to issues before they escalate.

Effective observability of Kubernetes-based applications is critical in ensuring the success of cloud-native architectures, which are increasingly being adopted across various industries to meet the demands of modern-day computing.

Differentiating between traditional monitoring tools and Kubernetes observability solutions

Traditional monitoring tools have been around for a long time and have proven to be effective in identifying and addressing issues within a given infrastructure.

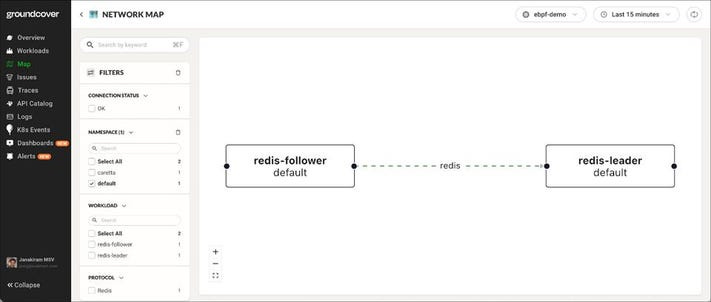

However, as organizations increasingly adopt Kubernetes as their container orchestration platform, monitoring these dynamic environments requires a new approach. Kubernetes observability solutions provide comprehensive insights into the health, performance, and behavior of containerized applications running in the cluster. They use instrumentation, tracing, logging, and other techniques to provide a real-time, end-to-end view of the entire system.

Using Kubernetes observability solutions, operators and developers can easily monitor and troubleshoot issues across all layers of the stack, including containers, pods, nodes, and networks.

Things to consider when selecting a Kubernetes observability tool

In selecting a Kubernetes observability tool, there are several important factors to consider. First and foremost is the level of visibility it provides.

A good tool should be able to monitor the entire Kubernetes cluster, including its applications and underlying infrastructure, and provide real-time insights into their performance and behavior. It should be able to detect any anomalies, errors, or system failures and provide alerts and notifications to the development teams.

Another important factor to consider is the level of scalability and flexibility of the tool. Kubernetes applications are highly dynamic and constantly changing, so the monitoring solution should be able to keep up with this pace and adapt accordingly. It should support multiple data formats and be able to integrate with various Kubernetes add-ons and external tools.

A good observability tool should strike a balance between functionality, ease of use, and affordability, providing the organization with a comprehensive view of its Kubernetes environment while also staying within budget constraints.

What Is The Difference Between Observability And Monitoring In DevOps?

DevOps is all about efficiency and making sure that everything runs smoothly.

Observability and monitoring are two concepts that are critical to achieving that. Although the terms are often used interchangeably, there is a distinct difference between them. Monitoring refers to the collection of metrics and logs that are used to determine the status of a system or application.

Observability, on the other hand, is the ability to understand why the system or application is behaving in a particular way. In other words, while monitoring focuses on what is happening, observability focuses on why it is happening.

This subtle difference can be the key to resolving issues quickly and ensuring that the overall health of the system remains strong. So, if you want to truly master DevOps, it is important to understand both observability and monitoring and how they work together.

Why Is Kubernetes Observability So Important?

In the world of container orchestration, Kubernetes has emerged as a standard for managing and deploying applications at scale.

However, as Kubernetes deployments grow more complex, observability has become an important concern for engineers and developers alike. Observability refers to visibility and insight into the behavior of systems; in the context of Kubernetes, it means being able to monitor and troubleshoot both applications and infrastructure. Kubernetes observability is essential because it enables teams to identify issues quickly and efficiently, reduce downtime, and ultimately improve the user experience.

Without a proper observability strategy, teams risk losing control of their clusters and encountering preventable issues that could have long-term consequences.

Best Kubernetes Observability Practices

1. Centralizing logs: A centralized log management system is one of the most valuable tools for monitoring Kubernetes environments. Consolidating logs from all nodes and components in a standardized format makes issues easier to spot and can reveal patterns that point to problems before they occur.

2. Metrics collection: Collecting metrics about pods, nodes, or the entire cluster and displaying them in charts, graphs, or dashboards provides a clear view of a Kubernetes cluster's performance. This information helps you analyze how the cluster performs over time, detect anomalies, and identify potential problems.

3. Distributed tracing: Understanding how services relate to and interact with one another can be tricky in complex systems. Distributed tracing shows the path of each request and the time spent in each service, which makes it easier to follow the flow of requests between services and identify bottlenecks or slow performance.

4. Alerting and notification: Alerting and notification systems are essential for a healthy Kubernetes observability environment. They can notify the relevant team members of problems as they occur or when certain thresholds have been exceeded. This is crucial, as it can help teams respond immediately to critical issues and avoid downtime.
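Centralized logging works best when every service writes logs in the same structured shape. Kubernetes captures whatever a container writes to stdout and stderr, and node-level agents can ship those lines to a central store. The sketch below uses only Python's standard logging module to emit one JSON object per line; the field names (timestamp, level, logger, message) and the convention of passing extra fields through a "fields" key are illustrative assumptions, not a standard that any particular log backend requires.

```python
import json
import logging
import sys
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render each log record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Assumed convention: structured fields arrive via extra={"fields": {...}}.
        entry.update(getattr(record, "fields", {}))
        return json.dumps(entry)


def build_logger(name: str, stream=sys.stdout) -> logging.Logger:
    """Create a logger that writes JSON lines to stdout, where the container runtime captures them."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger
```

A service could then call build_logger("checkout").info("payment failed", extra={"fields": {"order_id": "A123"}}), and every entry would carry the same keys, which makes filtering and correlating logs in the central system much simpler.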

How to set up effective monitoring and logging for a Kubernetes cluster

Monitoring is essential to maintain the health and performance of a K8s cluster.

It helps teams identify and diagnose issues before they impact the system. Logging, on the other hand, enables teams to track application activity and troubleshoot errors. Furthermore, it helps teams identify and analyze trends over time, leading to better decision-making.

To set up effective monitoring and logging for K8s, several tools and practices need to be in place. The following are some crucial steps to follow:

1. Identify the important metrics: The first step is to know what to monitor. Different K8s deployment scenarios may require different metrics to monitor. Some critical metrics include CPU usage, memory utilization, network traffic, and disk I/O.

2. Select an appropriate monitoring tool: Next, choose a monitoring tool that can track the identified metrics. Options include Prometheus, Grafana, and Datadog. Prometheus is one of the most popular choices: it collects metrics from Kubernetes deployments and, paired with Grafana for visualization, provides actionable insights.

3. Configure and deploy the monitoring stack: After selecting the monitoring tool, deploy it to the cluster. It's crucial to configure it correctly to capture and store data effectively. Using a stack such as Prometheus and Grafana simplifies the process.

4. Set up logs collection: For logging, teams can use a centralized logging system that collects logs from different sources. Popular tools include Elasticsearch, Fluentd, and Kibana.

5. Install and configure logging agents: Install and configure logging agents such as Fluent Bit or Fluentd on each worker node to forward log data to the centralized logging system.

6. Configure alerts: Configure alerts based on the defined thresholds to notify teams of any anomalies or critical issues.
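To avoid paging someone for every momentary spike, threshold alerts are usually required to hold for some time before they fire. The sketch below loosely mirrors the inactive, pending, and firing states of a Prometheus alerting rule with a for clause, but it is a simplified stand-in: it counts consecutive samples, whereas Prometheus evaluates PromQL expressions over time. The class name and parameters are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ThresholdAlert:
    """Hypothetical alert that fires only after N consecutive samples exceed a threshold."""

    name: str
    threshold: float
    for_samples: int  # consecutive breaches required before the alert fires
    _breaches: int = field(default=0, init=False)

    def observe(self, value: float) -> str:
        """Record one sample and return the state: 'inactive', 'pending', or 'firing'."""
        if value > self.threshold:
            self._breaches += 1
        else:
            self._breaches = 0  # any healthy sample resets the streak
        if self._breaches == 0:
            return "inactive"
        if self._breaches >= self.for_samples:
            return "firing"
        return "pending"
```

For example, ThresholdAlert("HighCPU", threshold=0.9, for_samples=3) fed the samples 0.95, 0.97, 0.99, 0.5 moves through pending, pending, firing, and back to inactive; only the firing state would trigger a notification.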

By identifying critical metrics, selecting appropriate monitoring and logging tools, and configuring them correctly, teams can stay on top of their infrastructure and applications, stay proactive, and avoid costly downtime.

Benefits of using metrics to understand system performance and user behavior

When it comes to understanding the performance of a system and user behavior, metrics play an essential role.

Gathering quantitative data about how customers are interacting with your product allows you to make informed business decisions, identify areas for improvement, and ultimately boost your bottom line.

By collecting and analyzing data, organizations can set tangible goals and track progress over time. Not only does this help businesses better understand their customers, but it also leads to a more efficient and effective operation overall.

In short, metrics provide insights that help organizations make the best possible decisions about their products and services.

Challenges when troubleshooting errors in a K8s environment and best practices for resolving them quickly

When it comes to troubleshooting errors in a K8s environment, there are a number of challenges that can arise.

One of the biggest hurdles is simply identifying the root cause of the issue, which can often be obscured by the complexity of the Kubernetes architecture.

Additionally, pinpointing the specific component or service that's causing the error can be a real challenge, particularly if your cluster is large and contains many different interconnected pieces. That said, there are a number of best practices that can help you resolve errors quickly and efficiently.

These may include techniques like utilizing log data and metrics to establish a better understanding of system behavior, running automated tests to identify and fix common errors, and performing regular system audits to catch potential problems before they have a chance to cause serious damage.
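As one concrete way to narrow down a failing component, the sketch below scans the JSON that kubectl get pods --all-namespaces -o json prints and flags containers that are waiting in CrashLoopBackOff or an image-pull error, or that have restarted more than a threshold. The field paths (status.containerStatuses, restartCount, state.waiting.reason) follow the Pod API; the function name and the default threshold of 3 restarts are hypothetical choices for illustration, and init containers are not checked.

```python
# Waiting reasons that usually point at a broken container or image.
SUSPICIOUS_REASONS = {"CrashLoopBackOff", "ImagePullBackOff", "ErrImagePull"}


def find_unhealthy_containers(pod_list: dict, max_restarts: int = 3) -> list[tuple[str, str, str]]:
    """Return (namespace/pod, container, reason) for containers that look unhealthy.

    pod_list is the parsed output of `kubectl get pods -o json`;
    max_restarts is an assumed threshold, tune it for your workloads.
    """
    findings = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        pod_id = f"{meta.get('namespace', 'default')}/{meta.get('name', '?')}"
        for status in pod.get("status", {}).get("containerStatuses", []):
            waiting = status.get("state", {}).get("waiting") or {}
            reason = waiting.get("reason")
            restarts = status.get("restartCount", 0)
            if reason in SUSPICIOUS_REASONS:
                findings.append((pod_id, status["name"], reason))
            elif restarts > max_restarts:
                findings.append((pod_id, status["name"], f"{restarts} restarts"))
    return findings
```

Saving the kubectl output to a file and passing json.load(...) of it to the function gives a short list of suspects to investigate first with their logs and events.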

By following these strategies and staying vigilant in your approach to error resolution, you can help ensure that your K8s environment runs smoothly and reliably over the long term.

Using Kubernetes' metrics and log data

Once you have examined the observed performance of your clusters, the next stage is to examine the Kubernetes deployment itself.

Although Kubernetes is self-healing, its behavior depends on the configuration you specify. Reviewing how the cluster is configured can reveal misconfigurations, for example.
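A configuration review can be partly automated. The sketch below assumes a Pod manifest that has already been parsed into a dict (for example from kubectl get pod NAME -o json) and flags three common issues: images without a pinned tag or digest, containers without resource requests or limits, and containers without health probes. These checks and the function name are illustrative picks, not an official Kubernetes policy.

```python
def audit_pod_spec(pod: dict) -> list[str]:
    """Flag a few common misconfigurations in a parsed Pod manifest (illustrative checks only)."""
    problems = []
    for c in pod.get("spec", {}).get("containers", []):
        name = c.get("name", "?")
        image = c.get("image", "")
        # Look only at the last path segment so a registry port (host:5000/...) is not mistaken for a tag.
        last_segment = image.rsplit("/", 1)[-1]
        pinned = "@" in last_segment or (":" in last_segment and not last_segment.endswith(":latest"))
        if not pinned:
            problems.append(f"{name}: image '{image}' is not pinned to a version")
        resources = c.get("resources", {})
        for kind in ("requests", "limits"):
            if not resources.get(kind):
                problems.append(f"{name}: no resource {kind} set")
        if "livenessProbe" not in c and "readinessProbe" not in c:
            problems.append(f"{name}: no liveness or readiness probe")
    return problems
```

Checks like these complement runtime signals: a container with no memory limit, for instance, is a likely suspect when a node comes under memory pressure.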

The various Kubernetes components also emit logs, each reflecting its own part of the system. These include:

• Kubernetes API server logs: These provide information about most of the activity in the system, including requests to create and delete resources, errors encountered while applying configurations, and so on.

• Kubelet logs: The kubelet runs on every node and carries out the work the Kubernetes API server assigns to it, starting and stopping the containers for its pods. Its logs provide useful insights into the pods and containers that were created, deleted, and updated on that node.

• Kubernetes Network Proxy (kube-proxy) logs: kube-proxy maintains the network rules on each node that route traffic to Kubernetes Services. Its logs help diagnose Service routing and connectivity problems between nodes.

• Pod or container logs: These are generated by applications running inside containers and can reveal issues with specific services, such as failed database connections.
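On nodes that use a CRI runtime such as containerd, container output is written to files under /var/log/pods (with symlinks in /var/log/containers), one line per chunk in the form timestamp, stream, tag, message, where the tag P marks a partial chunk and F marks the end of a line. Log agents such as Fluent Bit parse this format for you; the sketch below only illustrates what that parsing involves, assuming the CRI format (the Docker json-file format is different) and using hypothetical names.

```python
from typing import Iterable, Iterator, NamedTuple


class LogEntry(NamedTuple):
    timestamp: str  # timestamp of the final chunk of the line
    stream: str     # "stdout" or "stderr"
    message: str


def parse_cri_lines(lines: Iterable[str]) -> Iterator[LogEntry]:
    """Parse CRI-format log lines, joining partial ('P') chunks per stream."""
    buffers: dict[str, list[str]] = {}
    for line in lines:
        line = line.rstrip("\n")
        parts = line.split(" ", 3)
        if len(parts) < 3:
            continue  # skip malformed or empty lines
        timestamp, stream, tag = parts[:3]
        message = parts[3] if len(parts) == 4 else ""
        buffers.setdefault(stream, []).append(message)
        # The tag may carry extra flags after ':'; its first field is P or F.
        if tag.split(":")[0] == "F":
            yield LogEntry(timestamp, stream, "".join(buffers.pop(stream)))
```

Buffering per stream matters because stdout and stderr chunks can interleave in the same file; joining them into one buffer would splice unrelated messages together.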

Understanding the three pillars of Kubernetes Observability

Observability in Kubernetes refers to the ability to monitor, analyze, and troubleshoot the health and performance of the Kubernetes cluster, its resources, and the applications running on it.

Observability comprises three essential pillars, namely, monitoring, logging, and tracing. Let's dive deeper into each of these pillars.

1. Monitoring: Monitoring in Kubernetes is essential to keep track of the overall health and performance of the cluster and its resources. It involves collecting metrics and aggregating them over time to generate insights into the system's behavior.

2. Logging: Logging pertains to the ability to collect and store application logs to facilitate debugging and troubleshooting. Out of the box, Kubernetes offers only basic access to container logs (for example, through the kubectl logs command); cluster-wide log collection and storage typically rely on add-on stacks such as Fluentd and Elasticsearch.

3. Tracing: Tracing involves capturing and measuring the requests and responses flowing through distributed systems to identify performance bottlenecks and errors. Kubernetes does not ship with a tracing backend for your applications; teams commonly deploy a tool such as Jaeger alongside their workloads.
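Tracing depends on every service passing a trace context along with each request. The W3C Trace Context standard does this with a traceparent header of the form version-traceid-parentid-flags, and instrumentation libraries such as the OpenTelemetry SDKs, which can export to Jaeger, normally handle it for you. The sketch below only illustrates the idea: it keeps the incoming trace ID, generates a new span ID for the current hop, and starts a fresh trace when the header is invalid. It is a simplification (for example, it rejects the extra fields that future header versions may append), and the function names are hypothetical.

```python
import re
import secrets

# version (2 hex) - trace-id (32 hex) - parent-id (16 hex) - flags (2 hex), lowercase only
TRACEPARENT_RE = re.compile(r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent(sampled: bool = True) -> str:
    """Start a new trace with a random 16-byte trace ID and 8-byte span ID."""
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-{'01' if sampled else '00'}"


def child_traceparent(incoming: str) -> str:
    """Continue an incoming trace: keep the trace ID and flags, use a new span ID."""
    match = TRACEPARENT_RE.match(incoming.strip())
    if (
        not match
        or match.group(1) == "ff"          # version ff is forbidden
        or set(match.group(2)) == {"0"}    # all-zero trace ID is invalid
        or set(match.group(3)) == {"0"}    # all-zero parent ID is invalid
    ):
        return new_traceparent()
    _version, trace_id, _parent_id, flags = match.groups()
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"
```

Because every hop keeps the same trace ID, a tracing backend can stitch the spans from different services into one end-to-end view of the request.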

By combining the three pillars of observability, teams can gain more visibility into their systems' behavior and address issues proactively.

Kubernetes Observability Challenges: What Can You Expect?

A Kubernetes system usually consists of many interconnected components, which makes it more susceptible to failures.

This also increases your monitoring needs. The components are interdependent, so in some cases modifying one of them affects the entire application and its dependencies. Containers and microservices can also produce an enormous volume of health data.

Observability for container and microservice applications is difficult, especially without robust Kubernetes monitoring and analytics tools.

Keeping up with the dynamic nature of Kubernetes clusters

Kubernetes clusters are complex and constantly changing.

The number of containers may increase or decrease as demand changes, and storage requirements can vary as well. Kubernetes is a container orchestration platform aimed at automating infrastructure management, but that automation makes possible an enormous number of resource combinations.

As a result, log data from one instance may differ from that of another, and logging and metrics configurations change periodically.

Kubernetes observability is essential for ensuring the health and performance of a cluster, its resources, and the applications running on it. It rests on three pillars (monitoring, logging, and tracing), but it comes with several challenges that need to be addressed effectively to gain real visibility into system behavior.

Deep dive into key components of Kubernetes observability, such as Prometheus, Grafana, App Mesh, and more

Kubernetes observability has become a crucial aspect of modern cloud infrastructure management.

Comprehensive Kubernetes observability requires integrating multiple tools, including Prometheus, Grafana, App Mesh, and more. Prometheus collects metrics from Kubernetes clusters, Grafana provides dashboards for visualizing data trends in real time, and a service mesh such as AWS App Mesh adds visibility into traffic between services.

These tools help DevOps teams monitor the health of their Kubernetes clusters and maintain optimal performance. Kubernetes observability also involves tracing tools, such as Jaeger, that track interactions between microservices. With observability, IT teams can identify bottlenecks, detect security threats, troubleshoot issues, and optimize resource usage to meet business requirements.
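Prometheus collects metrics by scraping HTTP endpoints (conventionally /metrics) that serve its plain-text exposition format. In practice an application would use an official client library such as prometheus_client; the standard-library sketch below only shows what such an endpoint returns. The metric name http_requests_total, its labels, and the REQUESTS dictionary are hypothetical examples.

```python
from http.server import BaseHTTPRequestHandler

# Hypothetical counter store: (method, status) -> request count, updated by the app.
REQUESTS: dict[tuple[str, str], int] = {}


def _escape(value: str) -> str:
    # Label values must escape backslash, double quote, and newline.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_metrics(counters: dict[tuple[str, str], int]) -> str:
    """Render counters in the Prometheus text exposition format."""
    lines = [
        "# HELP http_requests_total Total HTTP requests handled.",
        "# TYPE http_requests_total counter",
    ]
    for (method, status), value in sorted(counters.items()):
        lines.append(f'http_requests_total{{method="{_escape(method)}",status="{_escape(status)}"}} {value}')
    return "\n".join(lines) + "\n"


class MetricsHandler(BaseHTTPRequestHandler):
    """Serve the current counters on /metrics for Prometheus to scrape."""

    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = render_metrics(REQUESTS).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)
```

Running HTTPServer(("", 8000), MetricsHandler).serve_forever() from the same http.server module would expose the endpoint; Prometheus then needs a scrape configuration (or Kubernetes service discovery) pointing at the pod's port, and Grafana can chart the resulting time series.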

By embracing Kubernetes observability's benefits, IT professionals can improve their organizations' agility, scalability, and overall operational efficiency.

Conclusion:

In today's fast-paced world, Kubernetes has emerged as a critical platform for managing modern applications.

However, the complexity of the system makes managing it and gaining visibility into it challenging. Kubernetes observability provides a holistic approach to gaining insights into system health, performance, and state, empowering you to troubleshoot issues more effectively and tune your system for better performance.

With monitoring, logging, tracing, alerting, and visualization, Kubernetes observability is a comprehensive solution for managing your Kubernetes clusters with confidence.